Introduction to Apache Spark

As officially defined, Apache Spark can be briefly described in a single sentence as:

Apache Spark™ is a multi-language engine for executing data engineering, data science, and machine learning tasks on both single-node machines and clusters.

Apache Spark Official Site

Under this definition lies one of the most active Apache projects within its community of users and developers. Without a doubt, Spark has become the most widely used scalable computing engine in the world today.

Its massive adoption has come hand in hand with some features that make it beneficial for use in multiple scenarios:

- Ability to process both batch and streaming processes.

- Support for multiple languages: Java, Scala, Python, and R.

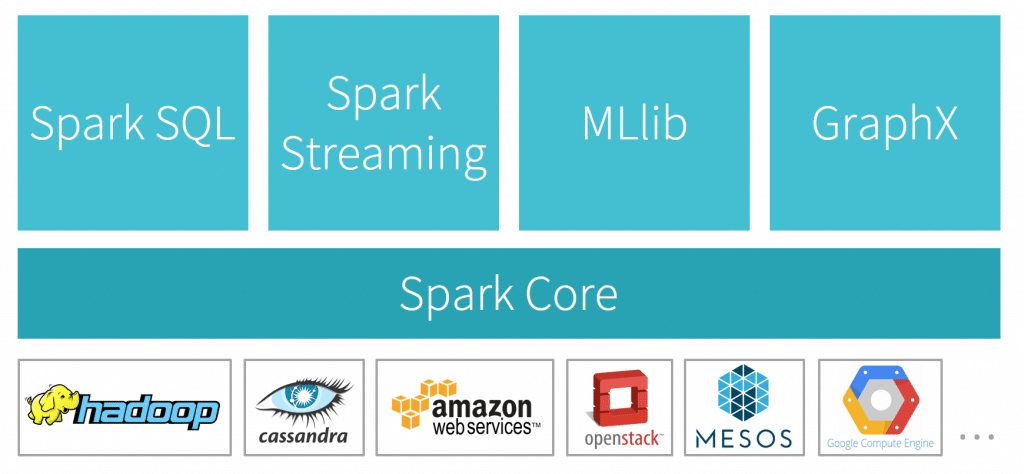

- Libraries that facilitate the development of specific applications, such as Spark SQL and MLib.

With this brief description, it can be concluded that there are many environments where the use of Spark would be beneficial, but is it worth integrating in all cases?

Integration

In general, there are two motivations for undertaking the integration of Apache Spark into a corporate Data Warehouse:

- Need for performance improvement.

- Need for advanced data analysis techniques.

In both cases, it can be stated that the usefulness of integrating Spark will be associated with the volume of data managed in the Data Warehouse. That is, the more information managed, the greater the benefit obtained. For small volumes, the application of simpler solutions can provide the expected benefits, with less complication in the DW architecture.

In the event that our Data Warehouse contains a large volume of information, an integration of Apache Spark can be undertaken, which will surely bring benefits. In the following sections, the two most common alternatives for these integration tasks are presented:

- Integrate Spark into an existing Data Warehouse.

- Design a new architecture for the Data Warehouse.

Integrate Apache Spark into an existing DW

This option entails minimizing both effort and risk, so that benefits in system exploitation can be achieved in a short period of time. The ease of integration is partly due to the wide range of products that have direct support for Apache Spark, including:

- Data Science and Machine Learning: Scikit Learn, pandas, TensorFlow, PyTorch,…

- SQL y BI Analysis: Power Bi, Tableau, Apache Superset, …

- Storage and infrastructure: MS SQL Server, Cassandra, mongoDB, Kafka, elasticsearch, Apache Airflow, …

However, integrating Spark into a DW designed for conventional exploitation implies that certain advantages may be difficult to achieve. For example, the design of the existing DW may have overlooked storage requirements that Apache Spark needs to run analysis algorithms optimally on multi-node machines.

In short, in these cases, the advantages obtained will depend on the original architecture of the DW.

New design integrating Apache Spark

If, where possible, a new Data Warehouse design is chosen that directly includes the use of Apache Spark, there will be greater possibilities of achieving better performance and results.

Taking into account the “use cases” that the new architecture will accommodate, performance improvements can be obtained by adjusting the configuration of the platform components to the requirements of the machine learning algorithms chosen in the proposed solution. For example, the processing of small batches, typically efficient in Spark, can be separated from complex blocks of information that can be managed with better performance in Hadoop or similar products.

A fundamental aspect that will allow starting from scratch in the design is to include scalability and elasticity of the system as an essential requirement. This will ensure that both simple ETL processes and heavier ML algorithms can be accommodated on the same platform, without falling into poor management of computing resources. With providers that allow dynamically scaling the resources of their DW (Snowflake, Azure SQL DW, etc.), it is easy to maintain low costs without compromising performance.

Conclusion

The scenarios in which Apache Spark can provide performance or analysis capacity advantages to a Data Warehouse are varied and heterogeneous. But it is in the case of systems with a large volume of data where all its benefits can be obtained, especially if the Data Warehouse architecture is designed from the beginning to take into account the requirements specific to Spark.

This post is also available in: