Mathematical Reasoning in LLMs

Large Language Models (LLM) have found a niche in a multitude of tasks related to the analysis and generation of content in different areas of knowledge. In the last decade, the use of LLMs has been expanding from its beginnings mainly associated with text generation and translation, to the current moment where its applications have become numerous.

These models, trained with large volumes of information in text format, learn linguistic and grammatical patterns, which allows them to answer questions, create content, and assist in data analysis tasks. However, since they are based on probabilistic mechanisms, these responses, contents, and analyses may lack precision in areas that require exact, not approximate, solutions, such as in the case of mathematical problems. In this way, the questionable mathematical calculation and reasoning abilities in most models have led to their use in scientific or data processing environments not spreading as rapidly as in tasks based on content creation.

In the following sections, we will attempt to assess the mathematical skills of some of the most commonly used models today and explore the potential for improvement through the utilization of certain strategies.

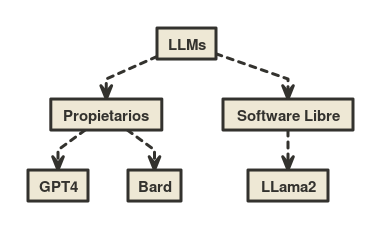

Evaluated Models

In order to assess the mathematical capabilities of widely used LLMs, we have chosen three models that demonstrate high performance in generic reasoning tasks.

Firstly, GPT-4, the latest iteration of the GPT series, known for its deep neural network architecture and its ability to process and generate text with advanced semantic comprehension. Its training, based on extensive datasets, enables it to provide more precise and contextually relevant responses.

Similarly, LLama2 is emerging as a significant innovation in the field, as it distinguishes itself by being distributed under the concept of open-source software. Its most comprehensive version, an LLM with 70 billion parameters, has managed to achieve performance levels comparable to established proprietary models.

Finally, the Bard model stands out for its specialized focus on generating coherent narratives; it is capable of creating texts that not only adhere to grammatical norms but also capture the emotional essence of a narrative. Currently, supporting Bard is the Palm 2 model, which, in its latest version, contributes enhanced mathematical training, among other capabilities.

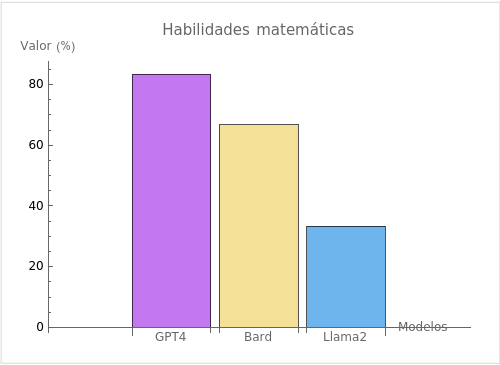

Mathematical Abilities

To assess the overall performance of the different LLMs in a mathematical problem-solving environment, a set of prompts in English covering various mathematical areas is proposed. These prompts have been generated using the “Mathematics Dataset” library, designed to evaluate the mathematical abilities of learning models. The following tests have been conducted without prior context:

| Id | Problem | Prompt | Answer |

|---|---|---|---|

| 1 | Arithmetic Addition and Subtraction | Evaluate -11 – (-21 + 16) – (-1 + 12) | -17 |

| 2 | Prime numbers | Is 18001811 a prime number? | True |

| 3 | Linear Algebra | Solve -388h + 131 = -373h + 416 for h | -19 |

| 4 | Algebra Polynomial Roots | Suppose -5t2 – 72845t – 291300 = 0. Calculate t | -14565, -4 |

| 5 | Differential Calculus | Differentiate -8893y4 + 2y2 + 8545 with respect to y | -35572y3 + 4y |

| 6 | Polynomials | Let k(p) = -31*p + 127. What is k(4)? | 3 |

The results for each of the tests are:

| Id | GPT4 | Bard | LLama2 |

|---|---|---|---|

| 1 | -17 | -17 | 5 |

| 2 | No answer | True | False |

| 3 | -19 | 19 | -19 |

| 4 | -14565, -4 | -783.93, 783.93 | -14569, 0 |

| 5 | −35572y3 + 4y | -35572y3 + 4y | -8893y4 + 2y2 + 854 |

| 6 | 3 | 3 | 3 |

From the previous results, it can be observed that GPT-4 achieves a notable level of accuracy, correctly solving 5 out of the 6 problems proposed, while at the other end, LLama2 only manages to solve 2 out of the 6 problems.

Alternatives and Improvements

Based on the conducted tests, it appears that if one were to seek valid solutions to mathematical problems of any type, none of the analyzed LLMs would be a valid choice, as they were unable to solve all of the test cases. However, alternative approaches have been proposed when formulating the resolution of mathematical prompts that significantly improve the results, for example:

- Use of specific tools: This strategy delegates the mathematical calculation or reasoning to a tool designed for this purpose, with the LLM providing the final response. For instance, GPT-4, when utilizing the WolframAlpha plugin, is capable of correctly solving the one problem in which it initially failed in our tests.

- Code generation: This approach to calculating accurate solutions is based on translating the mathematical problem into code whose execution produces the desired result. This strategy is employed by Bard to tackle the majority of mathematical prompts.

- Voting system: This alternative explores different methods for calculating the solution (e.g., generative response and code execution-based response). To produce the final answer, iterations are made by modifying the prompt until a consensus is reached among the responses given by each of the methods.

These strategies represent some alternatives that enable a realistic use of LLMs in solving mathematical problems, achieving the required precision to address tasks like data analysis, statistics, and more.

Conclusion

While Large Language Models (LLMs) initially did not find widespread application in the field of mathematics, today, evolved models and the application of specific techniques and strategies have made their use in solving mathematical problems a valid option.

If you are considering adopting AI-based solutions for data management in your organization, do not hesitate to contact us.

This post is also available in: